March 9, 2022 – In a disaster, whether an earthquake, flood, or hurricane, every second counts in delivering humanitarian aid. Civilian rescue and relief teams are under tremendous time pressure to navigate devastated areas and reach those in need, as seen in the recent earthquakes that hit Zagreb and Petrinja. To make humanitarian aid more efficient and speed up the rebuilding of stable infrastructure, Oikon has partnered with the Fraunhofer Institute for Industrial Mathematics ITWM in Germany to develop software that uses artificial intelligence (AI) to automatically analyse drone images in real time.

To assess the extent of a disaster, the damage caused, and the number of people affected, post-disaster analysis often relies on satellite imagery. However, because of the specifics of satellite remote sensing, valuable time passes before the data is made available, processed, and analysed. As a result, relief supplies can be inadvertently sent to almost uninhabited regions, while in other places people wait in vain for life-saving help. Fast in-situ imaging methods are therefore needed to identify the severely affected areas that require immediate assistance and to find possible rescue routes. Some aid organisations, such as the United Nations World Food Programme (WFP), use drones to take aerial photos of the crisis area. First responders then sift through hundreds of individual images and stitch them together to create an overall picture. This takes hours, so valuable time is lost before rescue teams arrive at their destination with their equipment and supplies.

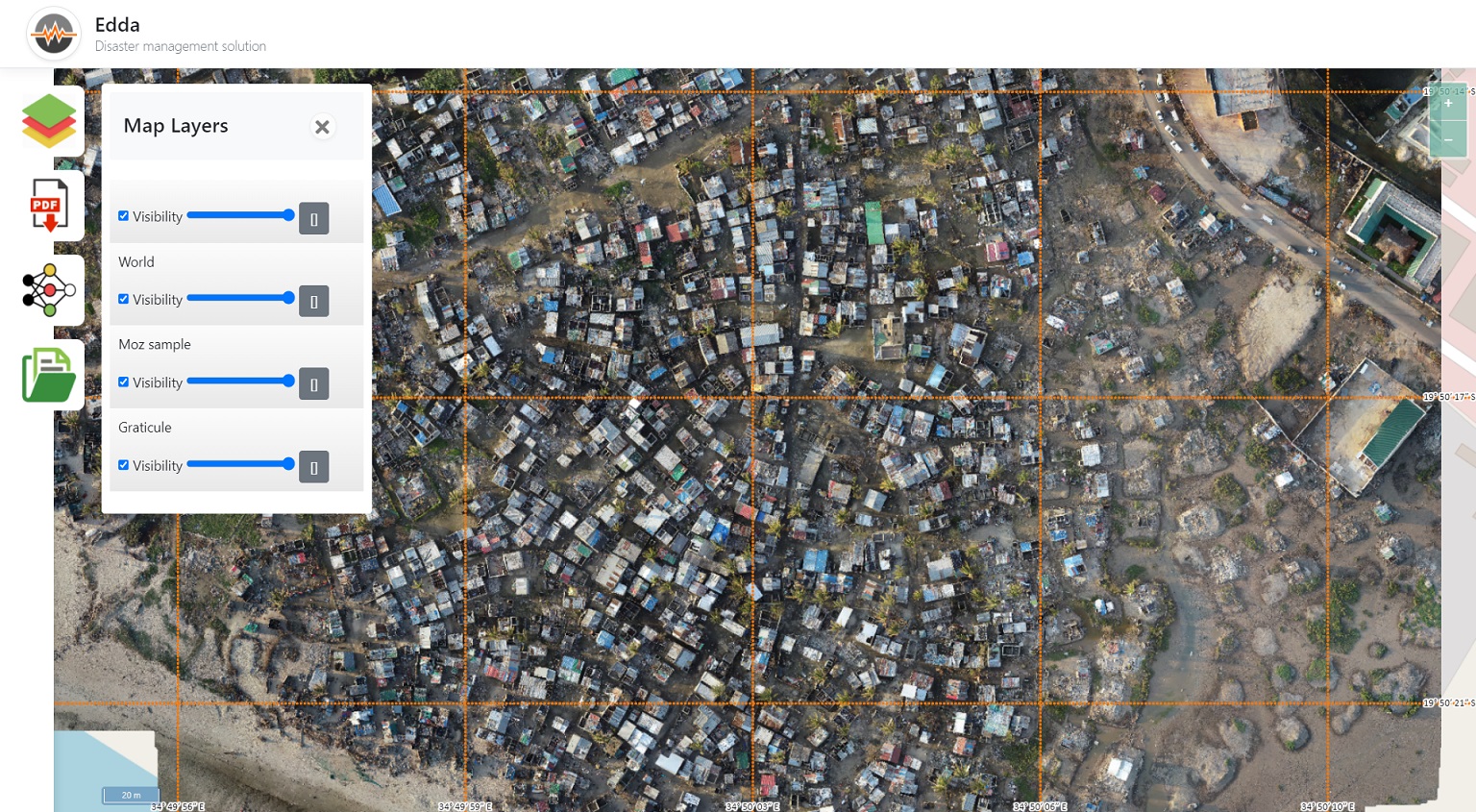

Oikon and Fraunhofer ITWM are jointly developing software that processes Earth observation imagery using computer vision and GIS algorithms. The software is already helping the researchers speed up the evaluation of drone images, as Markus Rauhut, Head of the Image Processing Department at Fraunhofer ITWM, explains: “We are developing software that uses artificial intelligence to evaluate drone images automatically and in real time. It is called EDDA (Efficient Humanitarian Assistance through Intelligent Image Analysis). It will work without an Internet connection and can be used on standard notebooks. Therefore, the tool will run even in devastated areas without infrastructure.”

The fully automated analysis combines image processing algorithms and GIS transformations with two deep learning models: a building segmentation model and a building damage classification model. The building segmentation model is a Feature Pyramid Network (FPN) with an EfficientNet-B2 backbone, trained on a combination of data from the Open Cities AI Challenge and the SpaceNet 4: Off-Nadir Buildings datasets. Building damage is classified using a VGG16 network trained on data acquired and annotated during a WFP remote sensing workshop in Mozambique. These models are now being enhanced and integrated into software that makes them fast and easy to use. To achieve this, the EDDA software solution uses image pre-processing, GIS post-processing, and data visualisation.
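The two-stage flow described above can be sketched as follows. This is a minimal illustration and not the EDDA implementation: the article does not say how the segmentation output is passed to the damage classifier, so the sketch assumes that each connected region of the building mask is treated as one building and handed to the classifier as a bounding-box crop. The names `find_buildings`, `assess_damage`, and the stand-in `classify` callable are hypothetical, and plain Python lists stand in for real image arrays and for the trained networks.

```python
from collections import deque
from typing import Callable, List, Tuple

Image = List[List[float]]         # hypothetical single-band image
Mask = List[List[int]]            # 1 = building pixel, 0 = background
Box = Tuple[int, int, int, int]   # (row_min, col_min, row_max, col_max), inclusive


def find_buildings(mask: Mask) -> List[Box]:
    """Group 4-connected building pixels and return one bounding box per group."""
    rows = len(mask)
    cols = len(mask[0]) if rows else 0
    seen = [[False] * cols for _ in range(rows)]
    boxes: List[Box] = []
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != 1 or seen[r][c]:
                continue
            # Breadth-first flood fill over one building footprint.
            queue = deque([(r, c)])
            seen[r][c] = True
            r0, c0, r1, c1 = r, c, r, c
            while queue:
                y, x = queue.popleft()
                r0, c0 = min(r0, y), min(c0, x)
                r1, c1 = max(r1, y), max(c1, x)
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and mask[ny][nx] == 1 and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            boxes.append((r0, c0, r1, c1))
    return boxes


def assess_damage(
    image: Image, mask: Mask, classify: Callable[[Image], str]
) -> List[Tuple[Box, str]]:
    """Crop every detected building from the image and classify the crop."""
    results = []
    for box in find_buildings(mask):
        r0, c0, r1, c1 = box
        crop = [row[c0:c1 + 1] for row in image[r0:r1 + 1]]
        results.append((box, classify(crop)))
    return results


def demo_classify(crop: Image) -> str:
    """Placeholder for a trained classifier: thresholds mean brightness."""
    pixels = [value for row in crop for value in row]
    return "intact" if sum(pixels) / len(pixels) > 5 else "damaged"


demo_image = [[9, 9, 0, 1], [9, 9, 0, 1], [0, 0, 0, 0]]
demo_mask = [[1, 1, 0, 1], [1, 1, 0, 1], [0, 0, 0, 0]]
demo_results = assess_damage(demo_image, demo_mask, demo_classify)
```

In a real deployment, `mask` would come from the segmentation network and `classify` would wrap the damage classification network; the brightness threshold here exists only so the sketch runs without any trained model.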

What also sets the EDDA software apart is the way trained models can be incorporated. Once a minimal working example and the required inputs are defined, a model can be run through the browser-based application and its results visualised in a geographic context. Because most post-processing steps are already implemented, the application is effectively modular.
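One way to picture this kind of modularity is a small plug-in registry. The sketch below is an assumption about how such an interface could look, not a description of the actual EDDA code: `ModelPlugin`, `ModelRegistry`, and the `"orthophoto"` input key are hypothetical names chosen for illustration. Each model declares the inputs it needs and supplies a callable, and the application checks the inputs before running it.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, Tuple


@dataclass(frozen=True)
class ModelPlugin:
    """Hypothetical description of a trained model the application can run."""
    name: str
    required_inputs: Tuple[str, ...]                  # e.g. ("orthophoto",)
    run: Callable[[Dict[str, Any]], Dict[str, Any]]   # the minimal working example


class ModelRegistry:
    """Keeps track of registered models and validates inputs before a run."""

    def __init__(self) -> None:
        self._plugins: Dict[str, ModelPlugin] = {}

    def register(self, plugin: ModelPlugin) -> None:
        if plugin.name in self._plugins:
            raise ValueError(f"model {plugin.name!r} is already registered")
        self._plugins[plugin.name] = plugin

    def run(self, name: str, inputs: Dict[str, Any]) -> Dict[str, Any]:
        plugin = self._plugins[name]
        missing = [key for key in plugin.required_inputs if key not in inputs]
        if missing:
            raise KeyError(f"model {name!r} is missing inputs: {missing}")
        return plugin.run(inputs)


# Usage: register a trivial stand-in model and run it.
registry = ModelRegistry()
registry.register(
    ModelPlugin(
        name="pixel_count",
        required_inputs=("orthophoto",),
        run=lambda inputs: {"pixels": len(inputs["orthophoto"])},
    )
)
result = registry.run("pixel_count", {"orthophoto": [0, 1, 1]})
```

With an interface of this shape, shared post-processing and map visualisation can operate on the returned dictionary without knowing which model produced it.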

“The goal of the EDDA project is to develop a user-friendly and lightweight system to obtain valuable information from the affected area for logistics planning. The idea is to use drones to capture images of a disaster area and use them to create orthophotos as input for the EDDA application, which could then detect destroyed infrastructure and provide an estimate of how many people are at risk, determine high-risk areas based on property damage, and display them on a map. This is very useful for rescue and relief workers to select priority areas and plan their logistics,” explains Branimir Radun, Head of the Natural Resource Management Department at Oikon.
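The prioritisation step mentioned in the quote can be illustrated with a simple grid aggregation. The article does not describe how EDDA derives high-risk areas or the number of people at risk, so everything below is an assumption: buildings are given as hypothetical `(x, y, damaged)` tuples in map units, damaged buildings are counted per square grid cell, and the people estimate simply multiplies that count by a caller-supplied `people_per_building` figure.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Building = Tuple[float, float, bool]   # (x, y, damaged), hypothetical format
Cell = Tuple[int, int]                 # grid cell index (column, row)


def rank_priority_cells(
    buildings: Iterable[Building],
    cell_size: float,
    people_per_building: float,
) -> List[Tuple[Cell, int, float]]:
    """Return (cell, damaged_count, estimated_people) sorted by damage, highest first."""
    damaged: Dict[Cell, int] = defaultdict(int)
    for x, y, is_damaged in buildings:
        if is_damaged:
            cell = (int(x // cell_size), int(y // cell_size))
            damaged[cell] += 1
    ranked = [
        (cell, count, count * people_per_building)
        for cell, count in damaged.items()
    ]
    # Most damaged cells first; ties are broken by cell index for stable output.
    ranked.sort(key=lambda item: (-item[1], item[0]))
    return ranked


# Usage with made-up coordinates and an assumed occupancy of 4 people per building.
sample_buildings = [
    (10.0, 10.0, True),
    (20.0, 30.0, True),
    (150.0, 10.0, True),
    (160.0, 20.0, False),
]
priority = rank_priority_cells(sample_buildings, cell_size=100.0, people_per_building=4.0)
```

A ranked list like this could then be drawn on a map so that relief teams see the most affected cells first; a realistic estimate of people at risk would need population data rather than a fixed occupancy value.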

The Fraunhofer team has been analysing and adjusting the image processing algorithms, while the Oikon team has developed the application that runs them. All developments have been shared and tested with the World Food Programme, which has also participated in the algorithm design process.

The prototype is ready and in the testing phase. It currently provides reliable information about the condition of buildings in a disaster area. Future development aims to extend the analysis to other infrastructure elements such as roads and bridges. The software is expected to be ready for use by the end of this year.

The EDDA project is supported by the Fraunhofer Foundation and, as a corporate social responsibility (CSR) project, is not commercial in nature. The software will be donated to the World Food Programme and several other emergency response teams and aid organisations around the world.